How to Improve Your SEO by Optimising a Dynamic Sitemap.xml.gz Using PHP

Search engines systematically crawl your website, and a sitemap.xml.gz is a crucial part of a technical SEO strategy because it helps the search engine crawler understand what is on your website, index it, and make it available to users. Since Google’s Panda update in 2011, an up-to-date sitemap has been widely recommended as a way to help show that your content is original and trustworthy. As the online environment is highly dynamic, changes must be made quickly and, most importantly, according to best practices. If your XML sitemap file is not generated correctly or is not easy to crawl, your search engine optimisation performance can suffer.

We strongly suggest you read this article if you are new to XML sitemaps. We will give you the information you need to understand the concept and show you how to create and optimise a sitemap.xml.gz using PHP for better SEO results.

What Is a Sitemap.xml?

A sitemap.xml, or XML sitemap, is a file that acts like a blueprint of your website: it lists the pages, URLs, videos, images and other elements on your site and the relationships between them. It helps major search engines understand which of your pages and files are most valuable, and crawl and index them more effectively.

It is essential to know the difference between regular sitemaps and XML sitemaps, their purpose and their types.

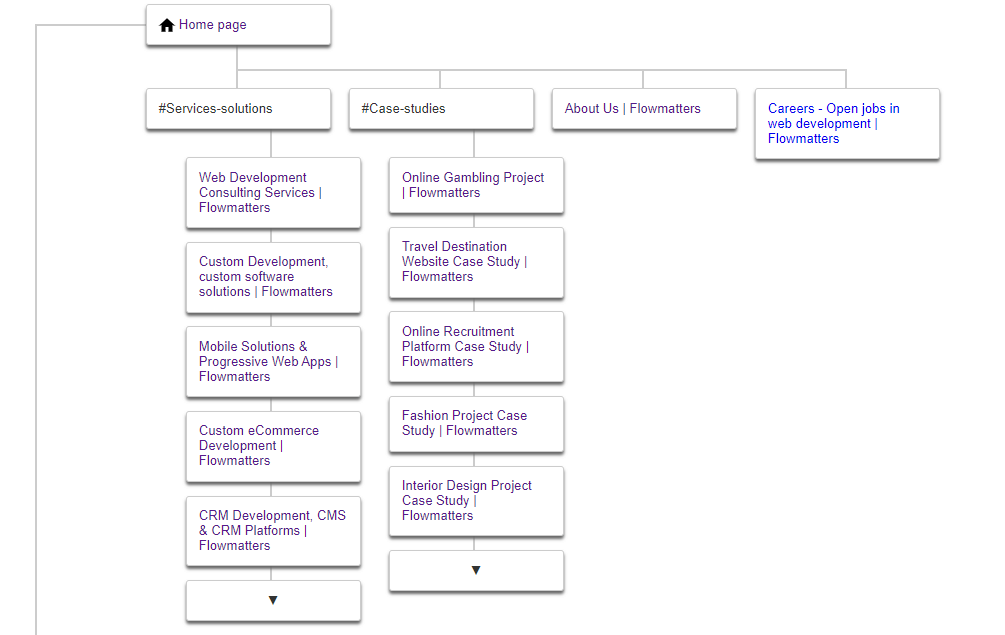

Regular sitemaps, or HTML sitemaps, help users find website pages efficiently. They include all website pages from the top level to the lowest and are organised as a table of contents with a clickable list of URLs. You can think of an HTML sitemap as a view of your website architecture.

XML sitemaps are created for search engines and their bots to make a website easier to understand. XML sitemaps come in the following types:

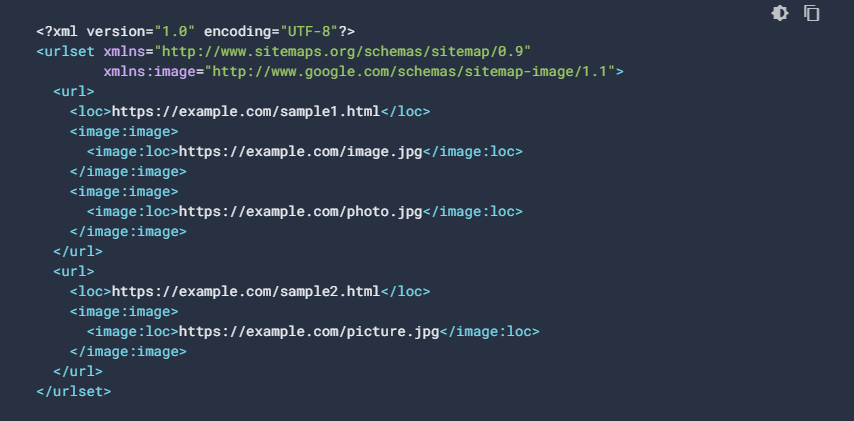

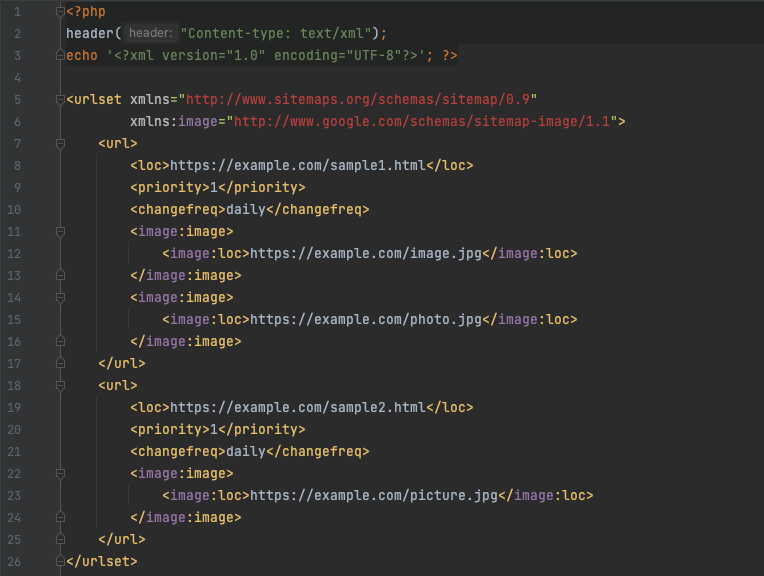

- An Image Sitemap lists your website images along with their location, title, and upload or last-modified date. This sitemap helps your website images get featured in Google Image Search. Here is an example of an XML image sitemap from Google’s documentation.

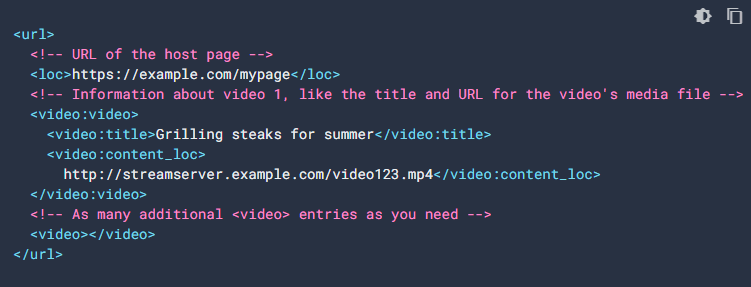

- A Video Sitemap works on the same principles as the image sitemap: it helps bots understand your video content and helps your videos get featured in the search engine results page (SERP). The following example is a screenshot of a video sitemap from Google Search Central, formerly known as Google Webmasters.

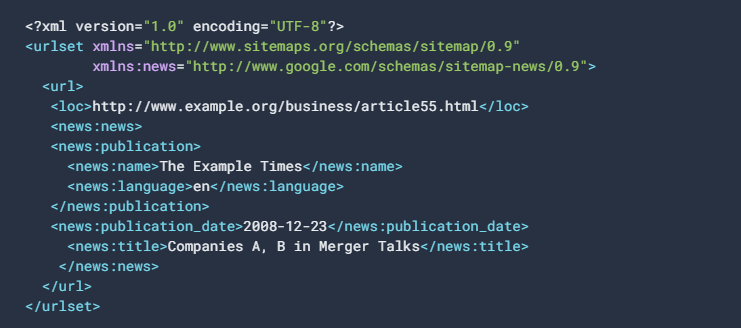

- A News Sitemap is intended for news websites. It can list at most 1,000 URLs, and the articles it lists cannot be older than two days. A news sitemap helps your website get featured on Google News; if you have more than 1,000 links, consider splitting them into multiple sitemaps and using a sitemap index file. The following example is a news sitemap entry.

- A Mobile Sitemap, according to Google Webmaster Trends Analyst John Mueller, is optional for mobile-friendly websites. However, it is required if the mobile version of your website uses a separate format designed specifically for mobile devices.
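As a rough illustration of the news sitemap entry mentioned in the list above, the sketch below builds one in PHP with the built-in XMLWriter class. The function name `buildNewsSitemap`, the publication name, the date and the article URL are placeholders invented for this example; the `news:` element names follow Google's published news sitemap schema.

```php
<?php
// Minimal sketch: build a news sitemap with one <url> entry per article.
// buildNewsSitemap() and the sample data below are illustrative assumptions.

function buildNewsSitemap(array $articles, string $publication, string $language): string
{
    $xml = new XMLWriter();
    $xml->openMemory();
    $xml->setIndent(true);
    $xml->startDocument('1.0', 'UTF-8');

    $xml->startElement('urlset');
    $xml->writeAttribute('xmlns', 'http://www.sitemaps.org/schemas/sitemap/0.9');
    $xml->writeAttribute('xmlns:news', 'http://www.google.com/schemas/sitemap-news/0.9');

    // A news sitemap may list at most 1000 URLs, so keep only the first 1000.
    foreach (array_slice($articles, 0, 1000) as $article) {
        $xml->startElement('url');
        $xml->writeElement('loc', $article['url']);
        $xml->startElement('news:news');
        $xml->startElement('news:publication');
        $xml->writeElement('news:name', $publication);
        $xml->writeElement('news:language', $language);
        $xml->endElement(); // news:publication
        $xml->writeElement('news:publication_date', $article['published']);
        $xml->writeElement('news:title', $article['title']);
        $xml->endElement(); // news:news
        $xml->endElement(); // url
    }

    $xml->endElement(); // urlset
    $xml->endDocument();

    return $xml->outputMemory();
}

echo buildNewsSitemap(
    [[
        'url'       => 'https://example.com/news/sample-article.html',
        'published' => '2024-01-15',
        'title'     => 'Sample Article Title',
    ]],
    'Example Times',
    'en'
);
```

In a real news sitemap you would also drop articles older than two days before passing them to the function.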

A sitemap can also be either static or dynamic. A static sitemap is easy to create with a sitemap generator, but it will not update automatically when you change the content of a page. If you change your website pages often, we strongly recommend creating a dynamic sitemap so that you do not have to create and upload sitemaps manually. A dynamic XML sitemap is updated automatically by your server whenever you make a change to your website, which is why it is considered a best practice. Google Search Console supports multiple sitemap formats, such as XML, RSS, mRSS, Atom 1.0, and a simple text file.

What Types of Websites Need an XML Sitemap?

Generally, when a website uses proper internal linking, Google can discover nearly all of it. Proper internal linking means that visitors can reach every page you consider valuable through some form of navigation (website menu, links in the footer, etc.). Even though discovery is possible without a sitemap, implementing one will improve the crawlability of large and complex sites and of specialised files.

- We strongly recommend you implement an XML sitemap when your website is extensive, because Google’s crawlers might miss new or recently updated pages.

- We advise a sitemap for businesses whose pages are isolated or not well connected to each other. Listing these pages in a sitemap helps ensure that Google does not miss any of them. Additionally, having an extensive collection of content pages on your site can help boost your rankings.

- We recommend a sitemap for new websites and those that have not been linked to by external sources. Googlebot and other web crawlers may struggle to find such a website, as they typically crawl the web by following links from one page to another. An XML sitemap helps increase crawl accessibility.

- We advise news sites or websites with plenty of media content, such as videos and images, to create an XML format sitemap to provide Google with additional information that will help your website appear more in the organic search results.

Is an XML Sitemap Important for SEO?

We believe that an XML sitemap is vital for search engine optimisation. According to Google, Googlebot crawls your website more intelligently with a sitemap than without one. Moreover, you can list in a sitemap only the pages you consider critical for your site and provide helpful details about them, such as when a page was last updated, how often it changes, and its other language versions.

Bing also says that sitemaps tell the search engine about essential URLs that the web crawler would otherwise find hard to discover.

XML sitemaps are essential for SEO, organic traffic and ranking. With this type of sitemap, you can set the page priority. Search engines have limited resources, and they have to divide their attention between millions of websites. To prioritise which sites to crawl, they assign each a crawl budget. Crawl budget refers to the number of web pages that search engines will crawl on a site within a given period. Search engines determine it from a combination of the crawl rate limit (how often they can request pages without causing problems for the site) and crawl demand (how often they want to visit). If your crawl budget is used inefficiently, search engines won’t be able to explore your website effectively, and this could result in poor SEO performance.

Moreover, you can prioritise new website pages or pages that must be indexed. Indexing your web pages is crucial for your website to appear in the search results, and we strongly recommend you make sure your high-quality pages are indexed, as they contribute to your website rankings. A sitemap also lets search engines see whether previously indexed pages have changed.

Gary Illyes, Analyst at Google, says that “sitemaps are hints, not orders”. This means that they help search engines understand and index your website URLs, but they do not guarantee it. However, 80% of website discovery is by following links, and almost 20% is from following sitemaps.

Keep in mind that if you include low-quality pages or low-value URLs in your sitemap, Google will also crawl those.

However, if your website has fewer than 500 pages that need to be in the search results and the internal linking is exhaustive, you might not need a sitemap. The crawling process starts from the homepage, and Google can locate all of the main pages on your site. But we still recommend implementing a sitemap, as it helps demonstrate that you are the original publisher of your website content.

How to Create a Dynamic Sitemap.XML.GZ Using PHP?

In this chapter, we will show you how to create a dynamic sitemap using PHP. Before we start, we want to mention that if your website has fewer than 500 links and you do not change its content very often, we recommend implementing a single sitemap.

If you have dynamic links on your website, we strongly recommend splitting your website content into individual sitemaps. For example, you can put each website category into a separate sitemap. You can group sitemaps based on content types, custom categories, etc. If you have too many dynamic links crammed into one sitemap file, it becomes difficult for crawlers to read, and you may also run into technical issues such as session timeouts.
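To make the idea of splitting by category concrete, here is a minimal sketch. It assumes each page is an array with hypothetical `category` and `url` keys, and it caps every file at 50,000 URLs, the per-sitemap maximum; the function name `groupPagesForSitemaps` and the file naming pattern are our own choices for the example.

```php
<?php
// Sketch: split pages into one group per category, capped at 50,000 URLs per file.
// The 'category' and 'url' keys are assumed field names, not a fixed format.

function groupPagesForSitemaps(array $pages, int $maxUrlsPerSitemap = 50000): array
{
    $byCategory = [];
    foreach ($pages as $page) {
        $byCategory[$page['category']][] = $page['url'];
    }

    $sitemaps = [];
    foreach ($byCategory as $category => $urls) {
        // array_chunk() splits an oversized category into several files.
        foreach (array_chunk($urls, $maxUrlsPerSitemap) as $index => $chunk) {
            $name = sprintf('sitemap_%s_%d.xml', $category, $index + 1);
            $sitemaps[$name] = $chunk;
        }
    }

    return $sitemaps;
}

$pages = [
    ['category' => 'blog', 'url' => 'https://example.com/blog/post-1'],
    ['category' => 'blog', 'url' => 'https://example.com/blog/post-2'],
    ['category' => 'products', 'url' => 'https://example.com/products/item-1'],
];

// Lists the sitemap file names that would be generated for the sample pages.
print_r(array_keys(groupPagesForSitemaps($pages)));
```

With the sample data this yields two groups, one for the blog pages and one for the product pages.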

1. Create Dynamic XML Sitemaps in PHP

In this scenario, we are going to work with two dynamic sitemaps, and we are going to generate them in PHP. We will name the first one sitemap.php and the second one sitemap_second.php:
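A minimal sketch of what sitemap.php could look like is shown below. It assumes the pages come from a hypothetical `getPages()` function (in practice, typically a database query) and that each page carries a URL, a last-modified date, a change frequency and a priority. The sample URLs and dates are placeholders, and sitemap_second.php would follow the same pattern with a different data source.

```php
<?php
// sitemap.php - minimal sketch of a dynamic XML sitemap.
// getPages() is a placeholder; replace it with your own data source.

function getPages(): array
{
    return [
        ['loc' => 'https://example.com/', 'lastmod' => '2024-01-15', 'changefreq' => 'daily', 'priority' => '1.0'],
        ['loc' => 'https://example.com/blog/first-post', 'lastmod' => '2024-01-10', 'changefreq' => 'weekly', 'priority' => '0.8'],
    ];
}

function buildSitemap(array $pages): string
{
    $xml = '<?xml version="1.0" encoding="UTF-8"?>' . "\n";
    $xml .= '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">' . "\n";

    foreach ($pages as $page) {
        $xml .= "  <url>\n";
        // Escape the URL so characters such as & stay valid XML.
        $xml .= '    <loc>' . htmlspecialchars($page['loc'], ENT_XML1 | ENT_QUOTES, 'UTF-8') . "</loc>\n";
        $xml .= '    <lastmod>' . $page['lastmod'] . "</lastmod>\n";
        $xml .= '    <changefreq>' . $page['changefreq'] . "</changefreq>\n";
        $xml .= '    <priority>' . $page['priority'] . "</priority>\n";
        $xml .= "  </url>\n";
    }

    return $xml . "</urlset>\n";
}

// Tell the client this is XML, then print the sitemap.
header('Content-Type: application/xml; charset=UTF-8');
echo buildSitemap(getPages());
```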

Let’s break this code down for a better understanding:

- The XML header tells the search engines what type of file it is and what they will find in the file. The declaration <?xml version="1.0" encoding="UTF-8"?> indicates conformance to version 1.0 of the XML standard and specifies the character encoding used in the document.

- The <urlset> element wraps all of the website’s URLs, and its xmlns attribute references the version of the sitemap protocol in use.

- Each individual URL entry must be specified via a <url> tag. All URL definitions must have a location tag (<loc>). The tag value should include the https protocol and the full page URL (https://example.com/sample1/html). Moreover, each URL definition might include additional metadata such as: <priority>, which describes the priority of the URL and can have a value between 0.0 and 1.0; and <changefreq>, which refers to the expected frequency at which the content on this URL changes. It can be set to always, hourly, daily, weekly, monthly, yearly or never. You can also include <lastmod>, which shows the date and time the content on that URL was last modified.

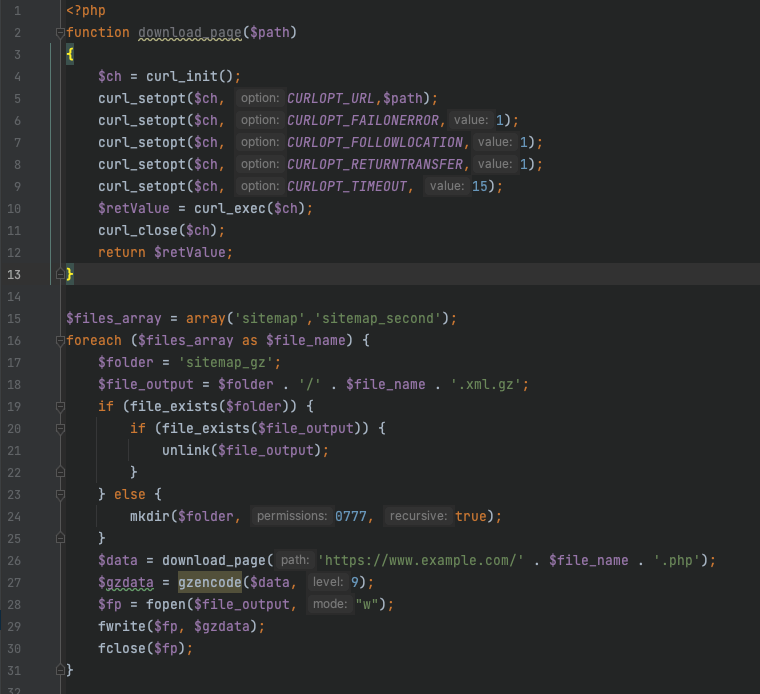

2. How to Compress the Dynamic XML Sitemaps

Because we are working in this scenario with dynamic links and a daily update frequency, we want to make sure our sitemaps regularly pick up new content. We will compress the sitemaps into .gz files through a cron job set to run once daily. We chose a cron job because it lets us automate tasks and schedule them on our server.
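One way to do this is sketched below. The script, which we call compress_sitemaps.php purely for illustration, fetches the output of each dynamic sitemap over HTTP and stores it as a .xml.gz file using PHP's `gzencode()`. The base URL argument, the file paths and the 03:00 schedule in the crontab comment are assumptions you would adapt to your own server.

```php
<?php
// compress_sitemaps.php - sketch of a script meant to be run daily by cron.
// Example crontab entry (path and URL are placeholders):
//   0 3 * * * php /var/www/html/compress_sitemaps.php https://example.com

function compressSitemap(string $xml, string $targetFile): bool
{
    // gzencode() produces gzip data (level 9 = best compression).
    $compressed = gzencode($xml, 9);
    if ($compressed === false) {
        return false;
    }

    // Write to a temporary file first so crawlers never see a half-written archive.
    $tmpFile = $targetFile . '.tmp';
    if (file_put_contents($tmpFile, $compressed) === false) {
        return false;
    }

    return rename($tmpFile, $targetFile);
}

// Run only when a base URL is passed on the command line.
$baseUrl = $argv[1] ?? null;
if ($baseUrl !== null) {
    foreach (['sitemap', 'sitemap_second'] as $name) {
        $xml = @file_get_contents("{$baseUrl}/{$name}.php");
        $target = __DIR__ . "/{$name}.xml.gz";
        if ($xml === false || !compressSitemap($xml, $target)) {
            fwrite(STDERR, "Could not update {$target}\n");
        }
    }
}
```

Fetching over HTTP keeps the dynamic sitemaps unchanged; if they live on the same server, you could instead call their generating code directly and skip the network round trip.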

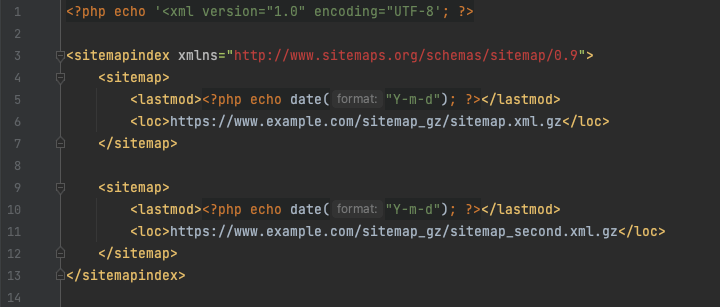

It is important not to rush to Google Search Console to submit the compressed sitemaps one by one. There is a smarter way to do it: create a sitemap index.

3. How to Create a Sitemap Index from the Compressed Sitemap.xml.gz

For best practice and to ensure efficient website crawling, you should create a sitemap index, respecting the sitemap guidelines and size limit of 50MB when uncompressed.
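The sketch below shows one possible way to generate such an index in PHP. The function name `buildSitemapIndex`, the base URL and the file names are assumptions for illustration. The `<sitemapindex>`, `<sitemap>`, `<loc>` and `<lastmod>` elements come from the sitemaps.org protocol.

```php
<?php
// Sketch: build a sitemap index that points at the compressed sitemaps.
// The base URL and file names are placeholders.

function buildSitemapIndex(string $baseUrl, array $files): string
{
    $xml = '<?xml version="1.0" encoding="UTF-8"?>' . "\n";
    $xml .= '<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">' . "\n";

    // $files maps each file name to the Unix timestamp of its last update.
    foreach ($files as $file => $lastModified) {
        $loc = rtrim($baseUrl, '/') . '/' . $file;
        $xml .= "  <sitemap>\n";
        $xml .= '    <loc>' . htmlspecialchars($loc, ENT_XML1 | ENT_QUOTES, 'UTF-8') . "</loc>\n";
        // lastmod is optional; date('c') formats the timestamp as ISO 8601.
        $xml .= '    <lastmod>' . date('c', $lastModified) . "</lastmod>\n";
        $xml .= "  </sitemap>\n";
    }

    return $xml . "</sitemapindex>\n";
}

$now = time();
echo buildSitemapIndex('https://example.com', [
    'sitemap.xml.gz'        => $now,
    'sitemap_second.xml.gz' => $now,
]);
```

In practice you would probably pass `filemtime()` of each archive instead of the current time, so that `<lastmod>` reflects when the file was actually regenerated.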

Once your sitemap index file is done and saved, you should submit it to Google Search Console. If you create multiple sitemaps, they should be saved in the same directory.

4. Add the Sitemap Index File in Your Robots.txt

Another best practice is to add your XML sitemap index file to your robots.txt file, which can be found in the root directory of your website’s server. The robots.txt file is used to avoid overloading your website with requests, and it also tells crawlers which URLs they can access on your website. Remember that robots.txt is not a way to keep web pages out of search engines. To do that, block indexing of the page (for example, with a noindex directive) or keep the page password-protected.
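The directive itself is a single line of the form `Sitemap: <absolute URL>`. As a small sketch, the hypothetical helper below appends that line to existing robots.txt content unless it is already there; the sample rules and the index URL are placeholders.

```php
<?php
// Sketch: make sure robots.txt content contains a Sitemap line for the index file.
// addSitemapDirective() is a hypothetical helper; the URL is a placeholder.

function addSitemapDirective(string $robotsTxt, string $sitemapUrl): string
{
    $directive = 'Sitemap: ' . $sitemapUrl;

    // Leave the content untouched if the directive is already present.
    foreach (preg_split('/\R/', $robotsTxt) as $line) {
        if (trim($line) === $directive) {
            return $robotsTxt;
        }
    }

    // Separate the directive from existing rules with a blank line.
    $body = rtrim($robotsTxt);

    return ($body === '' ? '' : $body . "\n\n") . $directive . "\n";
}

$robotsTxt = "User-agent: *\nDisallow: /admin/\n";
echo addSitemapDirective($robotsTxt, 'https://example.com/sitemap_index.xml');
```

The function works on a string rather than on the file itself, so you can review the result before writing it back to robots.txt.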

If you need help with SEO and digital marketing solutions, contact us today!

Frequently Asked Questions

Your sitemap should be located in the root directory of your domain (https://www.mysite.com/sitemap.xml).

The sitemap should be no larger than 50 MB when uncompressed and should contain a maximum of 50,000 URLs.

Yes. You can use a sitemap plugin, but we do not recommend it.

A sitemap.xml will receive the .gz extension after it is compressed with the GZIP compression technology.